Ark APIs

Ark provides REST APIs for managing resources and executing queries.

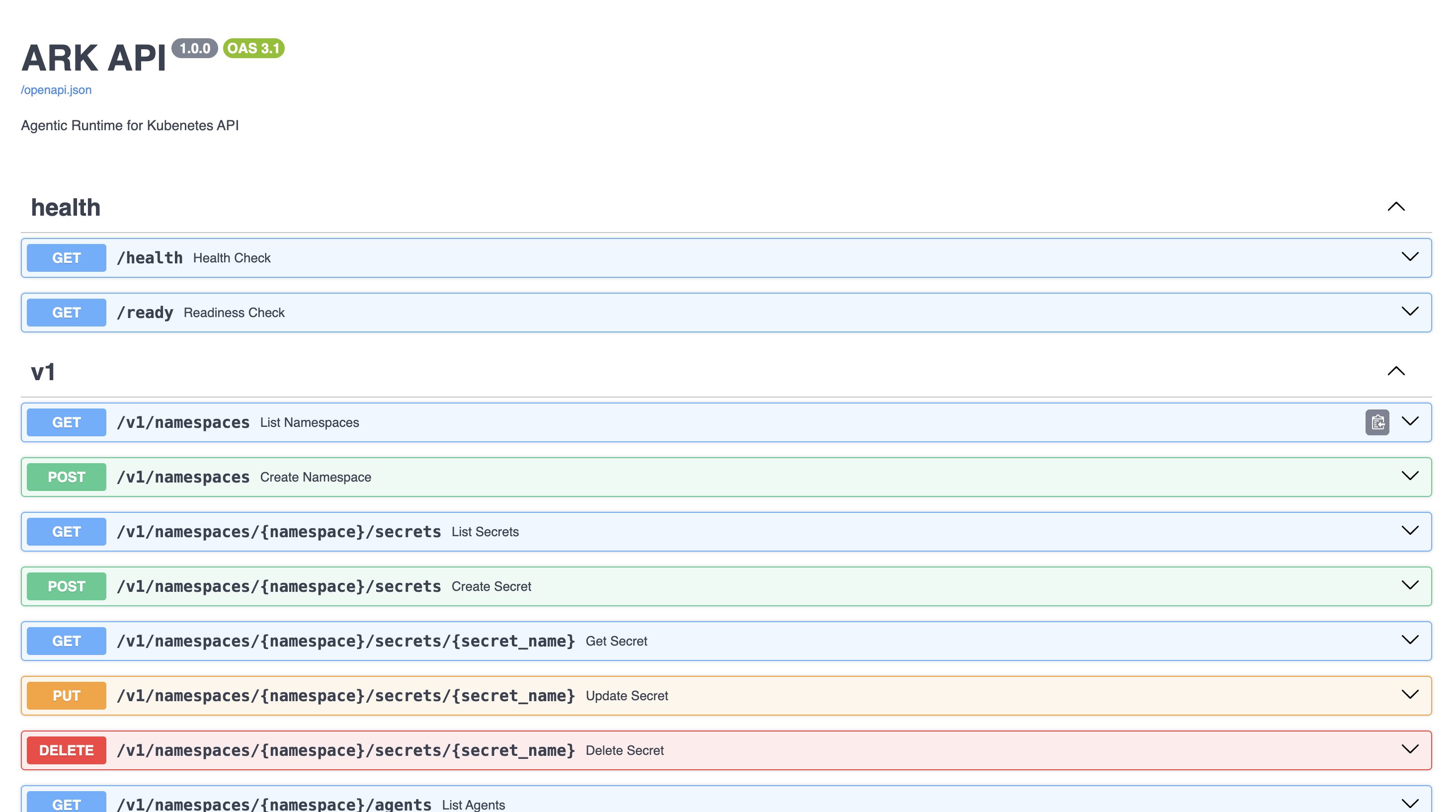

Interactive API Explorer

The easiest way to explore and test the APIs is through the built-in OpenAPI interface:

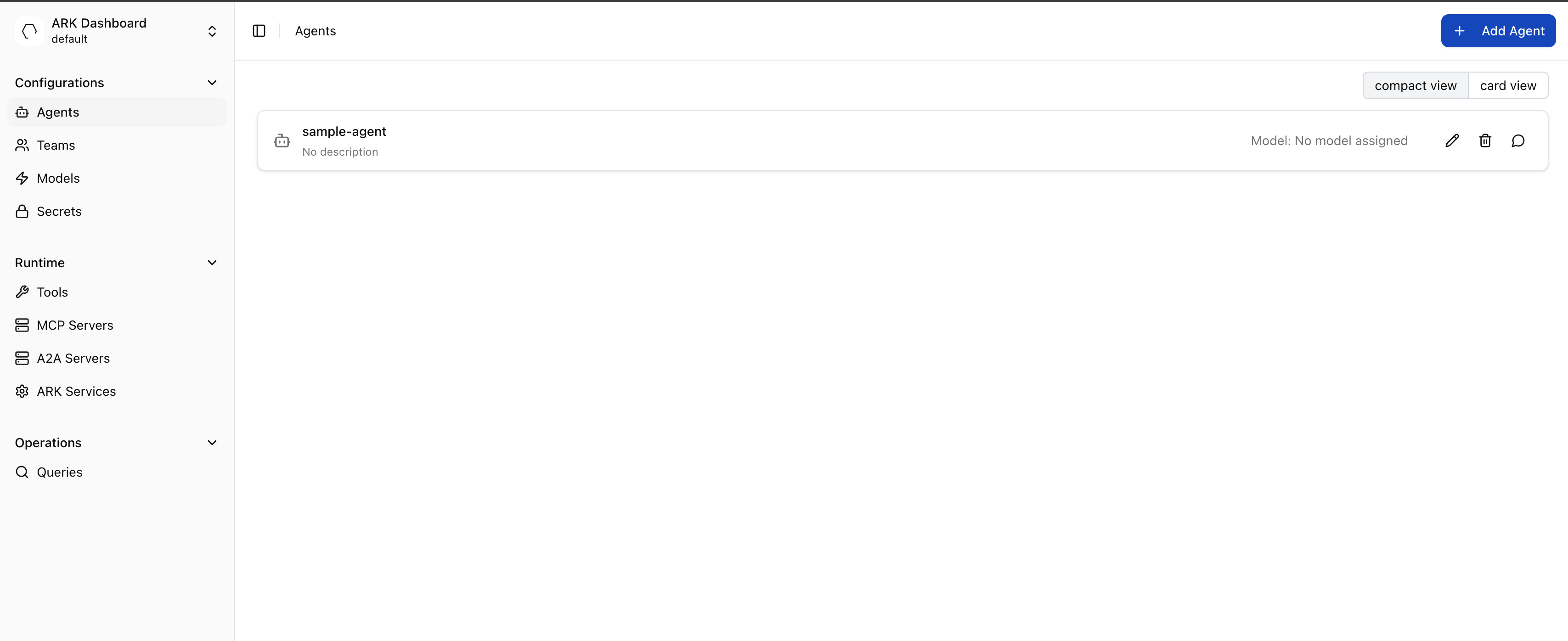

Start the dashboard with make dashboard or ark dashboard.

You can access the APIs using the ‘APIs’ link at the bottom left of the dashboard screen.

You can also check the routes that have been set up with make routes; the output lists the API routes, such as ark-api.default.127.0.0.1.nip.io:8000.

You can open the API docs directly and interact with them through the path: http://ark-api.default.127.0.0.1.nip.io:8080/docs

Authentication

Ark APIs support multiple authentication methods depending on the AUTH_MODE configuration:

- OIDC/JWT: For dashboard users (when AUTH_MODE=sso or AUTH_MODE=hybrid)
- API Keys: For service-to-service communication (when AUTH_MODE=basic or AUTH_MODE=hybrid)
- No Authentication: For development environments (when AUTH_MODE=open)
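The mapping above can be written down as a small lookup, which is handy when a client needs to decide which credential to send. This is only a sketch: the mode names come from the list above, while the labels `oidc_jwt` and `api_key` and the function name are hypothetical and not part of Ark.

```python
# Credential types accepted per AUTH_MODE, as described in the list above.
# The string labels and names here are illustrative, not Ark identifiers.
ACCEPTED_CREDENTIALS = {
    "sso": {"oidc_jwt"},
    "basic": {"api_key"},
    "hybrid": {"oidc_jwt", "api_key"},
    "open": set(),  # development mode: no authentication
}


def accepted_credentials(auth_mode: str) -> set[str]:
    """Return the credential types a given AUTH_MODE accepts."""
    try:
        return set(ACCEPTED_CREDENTIALS[auth_mode])
    except KeyError:
        raise ValueError(f"unknown AUTH_MODE: {auth_mode!r}") from None


print(sorted(accepted_credentials("hybrid")))  # ['api_key', 'oidc_jwt']
```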

Using API Keys

For programmatic access, create API keys and use HTTP Basic Authentication:

    # Create an API key
    curl -X POST http://localhost:8000/v1/api-keys \
      -H "Content-Type: application/json" \
      -d '{"name": "My Service Key"}'

    # Authenticate a request with the key pair using HTTP Basic Authentication
    curl -u "pk-ark-xxxxx:sk-ark-xxxxx" \
      http://localhost:8000/v1/namespaces/default/agents

See the Authentication Guide for complete configuration details.
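For clients that do not use curl, the second call above might look roughly like this with only the Python standard library. It is a sketch: the helper names are hypothetical, and the key pair is the same placeholder used in the curl example. HTTP Basic Authentication is just the base64 encoding of `public:secret` in an Authorization header, which is what `curl -u` produces.

```python
import base64
import urllib.request


def basic_auth_header(public_key: str, secret_key: str) -> str:
    """Build the Authorization header value that `curl -u public:secret` sends."""
    raw = f"{public_key}:{secret_key}".encode("utf-8")
    return "Basic " + base64.b64encode(raw).decode("ascii")


def build_list_agents_request(base_url: str, namespace: str,
                              public_key: str, secret_key: str) -> urllib.request.Request:
    """Prepare (but do not send) a GET request for the agents in a namespace."""
    return urllib.request.Request(
        f"{base_url}/v1/namespaces/{namespace}/agents",
        headers={"Authorization": basic_auth_header(public_key, secret_key)},
        method="GET",
    )


request = build_list_agents_request(
    "http://localhost:8000", "default", "pk-ark-xxxxx", "sk-ark-xxxxx"
)
print(request.get_method(), request.full_url)
# To actually send it (requires a running ark-api):
# with urllib.request.urlopen(request) as resp:
#     print(resp.read().decode("utf-8"))
```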

Starting the APIs

    # Run locally for development
    make ark-api-dev

    # Or run the container image
    docker run -p 8000:8000 ark-api:latest

The APIs will be available at http://localhost:8000.

Browse the complete API documentation at http://localhost:8000/docs when the service is running.

Resource APIs

Standard REST APIs for managing Ark resources:

- Agents: /v1/namespaces/{namespace}/agents
- Teams: /v1/namespaces/{namespace}/teams
- Queries: /v1/namespaces/{namespace}/queries
- Models: /v1/namespaces/{namespace}/models
- Secrets: /v1/namespaces/{namespace}/secrets
- API Keys: /v1/api-keys (for service authentication)
- Namespaces: /v1/namespaces

The APIs follow standard Kubernetes REST patterns, such as POST to create and DELETE to delete.
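A small helper can keep these paths consistent in client code. The sketch below builds the namespaced collection paths listed above. The per-item path (`.../{kind}/{name}`) is an assumption based on the usual Kubernetes-style convention and is not confirmed by this page; check the API docs at /docs before relying on it. All function names are hypothetical.

```python
from urllib.parse import quote

# Namespaced resource kinds taken from the list above.
NAMESPACED_KINDS = {"agents", "teams", "queries", "models", "secrets"}


def collection_url(base_url: str, kind: str, namespace: str = "default") -> str:
    """URL of a namespaced collection, e.g. the target of a POST to create."""
    if kind not in NAMESPACED_KINDS:
        raise ValueError(f"unknown namespaced resource kind: {kind!r}")
    return f"{base_url.rstrip('/')}/v1/namespaces/{quote(namespace, safe='')}/{kind}"


def item_url(base_url: str, kind: str, name: str, namespace: str = "default") -> str:
    """URL of a single resource, e.g. the target of a DELETE.

    ASSUMPTION: items live at <collection>/<name>; verify against /docs.
    """
    return f"{collection_url(base_url, kind, namespace)}/{quote(name, safe='')}"


print(collection_url("http://localhost:8000", "agents"))
print(item_url("http://localhost:8000", "agents", "my-agent"))
```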

Query API

Queries are the primary way to interact with Ark. Use POST /v1/queries/ to send input to any agent, team, model, or tool.

List Available Targets

See all available agents, teams, models, and tools:

    curl http://localhost:8000/v1/agents/
    curl http://localhost:8000/v1/models/
    curl http://localhost:8000/v1/teams/
    curl http://localhost:8000/v1/tools/

Creating a Query

Send a query to any target:

    curl -X POST http://localhost:8000/v1/queries/ \
      -H "Content-Type: application/json" \
      -d '{
        "name": "my-query",
        "type": "user",
        "input": "Hello, how can you help me?",
        "target": {"type": "agent", "name": "my-agent"}
      }'

Response:

    {
      "metadata": {
        "name": "my-query",
        "namespace": "default"
      },
      "spec": {
        "type": "user",
        "input": "Hello, how can you help me?",
        "target": {"type": "agent", "name": "my-agent"}
      },
      "status": {
        "phase": "done",
        "response": {
          "content": "Hello! How can I help you today?"
        },
        "conversationId": "conv-abc123"
      }
    }

Query Fields

| Field | Required | Description |
|---|---|---|
| name | Yes | Unique query name |
| type | No | Input type, defaults to "user" |
| input | Yes | The user message text |
| target | Yes | Target with type (agent/model/team/tool) and name |
| conversationId | No | Continue a previous conversation |
| sessionId | No | Group queries for telemetry tracking |
| timeout | No | Query timeout, e.g. "5m" |
| metadata.annotations | No | Custom Kubernetes annotations |
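The table translates naturally into a small payload builder that catches missing required fields before a request is sent. This is a client-side sketch only: the field names and target types come from the table, but the function name and the validation rules (for example, rejecting unknown fields) are hypothetical choices and do not describe how the server validates requests.

```python
import json

# Target types and optional top-level fields taken from the table above.
TARGET_TYPES = {"agent", "model", "team", "tool"}
OPTIONAL_FIELDS = {"type", "conversationId", "sessionId", "timeout", "metadata"}


def build_query(name: str, input_text: str, target_type: str,
                target_name: str, **optional) -> dict:
    """Assemble a request body for POST /v1/queries/ with basic checks."""
    if not name or not input_text or not target_name:
        raise ValueError("name, input, and target name are required")
    if target_type not in TARGET_TYPES:
        raise ValueError(f"target type must be one of {sorted(TARGET_TYPES)}")
    unknown = set(optional) - OPTIONAL_FIELDS
    if unknown:
        raise ValueError(f"unknown fields: {sorted(unknown)}")

    payload = {
        "name": name,
        "input": input_text,
        "target": {"type": target_type, "name": target_name},
    }
    # Only include optional fields that were actually provided.
    payload.update({key: value for key, value in optional.items() if value is not None})
    return payload


body = build_query("my-query", "Hello, how can you help me?", "agent", "my-agent",
                   timeout="5m")
print(json.dumps(body, indent=2))
```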

Conversations

Use conversationId to maintain context across queries. The memory service manages conversation history automatically.

    import requests

    url = "http://localhost:8000/v1/queries/"

    # First query: starts a new conversation
    first = requests.post(url, json={
        "name": "conv-q1",
        "input": "What is the capital of France?",
        "target": {"type": "agent", "name": "my-agent"},
    }).json()

    conversation_id = first["status"]["conversationId"]

    # Follow-up query: reuses the conversation so "its" resolves from context
    followup = requests.post(url, json={
        "name": "conv-q2",
        "input": "What is its population?",
        "target": {"type": "agent", "name": "my-agent"},
        "conversationId": conversation_id,
    }).json()

    print(followup["status"]["response"]["content"])

The conversationId is returned in status.conversationId after the first query completes. Pass it to subsequent queries to continue the conversation. See Streaming Responses for how metadata is returned in streaming responses.
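When chaining several queries, it can help to isolate the step that carries the conversationId forward. The sketch below does only that, on plain dictionaries, so it can be tried without a running service. The helper name is hypothetical, and falling back to a fresh conversation when the ID is missing is a design choice of this example rather than documented Ark behaviour.

```python
def with_conversation(payload: dict, previous_response: dict) -> dict:
    """Return a copy of payload that continues the previous query's conversation.

    If the previous response has no status.conversationId, the payload is
    returned unchanged, which starts a new conversation.
    """
    conversation_id = previous_response.get("status", {}).get("conversationId")
    if conversation_id is None:
        return dict(payload)
    return {**payload, "conversationId": conversation_id}


# Shape taken from the example response shown earlier on this page.
previous = {"status": {"phase": "done", "conversationId": "conv-abc123"}}
followup_body = with_conversation(
    {
        "name": "conv-q2",
        "input": "What is its population?",
        "target": {"type": "agent", "name": "my-agent"},
    },
    previous,
)
print(followup_body["conversationId"])  # conv-abc123
```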

Custom Annotations

Pass custom Kubernetes annotations to the query resource via metadata.annotations:

    curl -X POST http://localhost:8000/v1/queries/ \
      -H "Content-Type: application/json" \
      -d '{
        "name": "annotated-query",
        "input": "Hello",
        "target": {"type": "agent", "name": "my-agent"},
        "metadata": {
          "annotations": {
            "ark.mckinsey.com/a2a-context-id": "ctx-abc123",
            "ark.mckinsey.com/streaming-enabled": "true"
          }
        }
      }'

Querying with Python

A fuller example showing query creation with optional authentication, optional request fields, and basic error handling:

    import requests
    from requests.auth import HTTPBasicAuth  # only needed for key-pair auth

    base_url = "http://localhost:8000"

    response = requests.post(
        f"{base_url}/v1/queries/",
        # Uncomment to use auth with key pair
        # auth=HTTPBasicAuth("pk-ark-xxxxx", "sk-ark-xxxxx"),
        headers={
            "Content-Type": "application/json",
            # Uncomment to use auth with bearer token
            # "Authorization": "Bearer YOUR_TOKEN_HERE",
        },
        json={
            "name": "python-query",
            "type": "user",
            "input": "Summarize the latest updates",
            "target": {"type": "agent", "name": "my-agent"},
            # Optional: continue a conversation
            # "conversationId": "previous-conversation-id",
            # Optional: session tracking
            # "sessionId": "my-session-id",
            # Optional: query timeout
            # "timeout": "5m",
        }
    )

    # Raise an exception on 4xx/5xx responses
    response.raise_for_status()

    query = response.json()
    print(query["status"]["response"]["content"])

Querying Different Targets

The same query API works for agents, teams, models, and tools:

Agent

    # url is the queries endpoint used in the earlier examples
    requests.post(url, json={
        "name": "agent-query",
        "input": "What's the weather like in New York?",
        "target": {"type": "agent", "name": "weather-agent"},
    })